Hi! If you like this piece and want to support my work, please subscribe to my premium newsletter. It’s $70 a year, or $7 a month, and in return you get a weekly newsletter that’s usually anywhere from 5000 to 185,000 words, including vast, extremely detailed analyses of NVIDIA, Anthropic and OpenAI’s finances, and the AI bubble writ large. I just put out a massive Hater’s Guide To Private Equity and one about both Oracle and Microsoft in the last month.

I am regularly several steps ahead in my coverage, and you get an absolute ton of value, several books’ worth of content a year, in fact! In the bottom right hand corner of your screen you’ll see a red circle — click that and select either monthly or annual. Next year I expect to expand to other areas too. It’ll be great. You’re gonna love it.

Before we go any further: no, this is not going to turn into a geopolitics blog. That being said, it’s important to understand the effect of the war in Iran on everything I’ve been discussing.

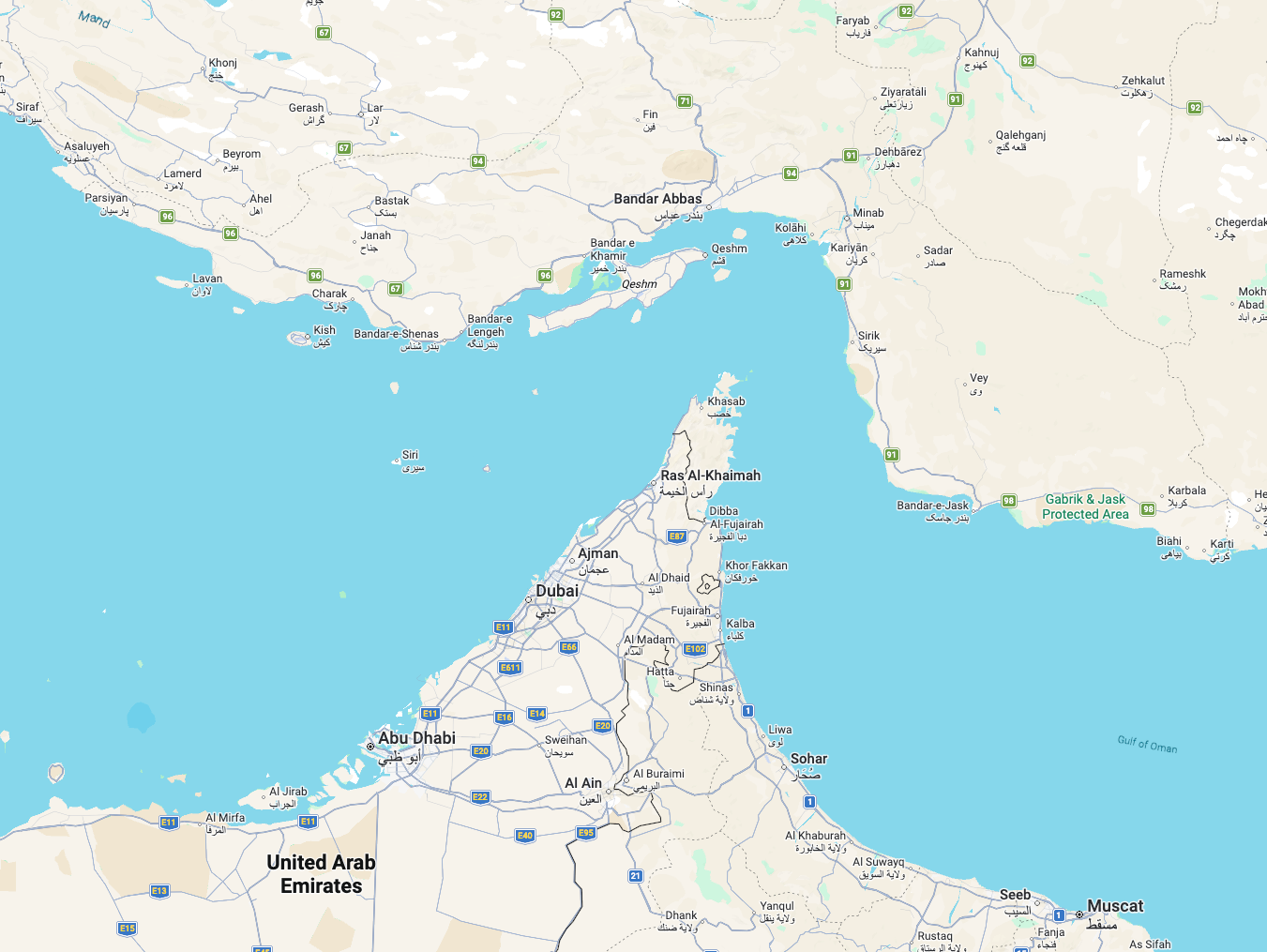

So, let’s start simple. Open Google Maps. Scroll to the Middle East. Look at the bit of water separating the Gulf Arab countries from Iran. That’s the Persian Gulf.

Scroll down a bit. Do you see the narrow channel between the United Arab Emirates and Iran? That’s the Strait of Hormuz. At its narrowest point, it measures 24 miles across. Around 20% of the world’s oil and a similar percentage of the world’s liquefied natural gas (LNG) flows through it each year.

Yes, that natural gas, the natural gas being used to power data centers like OpenAI and Oracle’s “Stargate” Abilene (which I’ll get to in a bit) and Musk’s Colossus data center.

But really, size is misleading. Oil and gas tankers are massive, and they’re full to the brim with incredibly toxic material. Spills are, obviously, bad. Also, because of their size, these tankers need to stick to where the water is deep enough, lest they find themselves stuck.

As a result, there are two lanes that tankers use when navigating through the Strait of Hormuz — one going in, one going out. This is a sensible idea intended to reduce the risk of collisions, but it also means that the potential chokepoint is even smaller.

Anyway, at the end of last month, Iran’s Revolutionary Guard Corps unilaterally closed off the strait, warning merchant shipping that any attempt to travel through the strait was “not allowed.”

This closure, for what it’s worth, is not legally binding. Iran can’t unilaterally close a stretch of international waters. And yes, while some of those shipping lanes cross through Iran’s territorial waters (and Oman’s, for that matter), they’re still governed by the UN Convention on the Law of the Sea (UNCLOS), which gives ships the right of transit passage through narrow straits used for international navigation, even where part of the waters belong to another state, and says that nations “shall not hamper transit passage.” That requirement, I should add, cannot be suspended.

Still, merchant captains don’t want to risk getting themselves and their crews blown up, or arrested and thrown in Evin Prison. Insurers don’t want to pay for any ship that gets blown up, or indeed, for the ensuing environmental catastrophe. And the UAE doesn’t want its pristine beaches covered in crude oil.

And so, the tankers are staying put. And they’ll stay there until one of four things happens:

- Iran rescinds its ban on travel through the strait.

- The security situation improves (either because Iran’s ability to attack shipping becomes sufficiently degraded, or because the Gulf countries, or perhaps their Western allies, feel sufficiently confident that they can safely escort ships through the strait).

- The current Iranian government is overthrown and the conflict ends.

- Both sides reach an agreement and we return to the status quo.

Of the first three, none feels particularly likely, at least in the short-to-medium term. Maybe I’m wrong. Maybe everything reverses and everyone suddenly works it out — Trump realizes that he’s touching the stove and pulls out after claiming a “successful operation.” The world is chaotic and predicting it is difficult.

Nevertheless, before that happens, closing the Strait of Hormuz means that Iran can inflict pain on American consumers at the pump, and we’ve already seen a 30% overnight spike in oil prices, with the price of a barrel jumping over $100 for the first time since 2022 (though as of writing this sentence it’s around $95). With midterms on the horizon, Iran hopes that it can translate this consumer pain to political pain for Donald Trump at the ballot box.

This is all especially nasty when you consider that the price of oil is directly tied to inflation. It influences shipping costs, and a lot of medicines, construction materials, and consumer goods have petrochemical inputs. In very simple terms, if oil is used to make your stuff (or get it to you), that stuff goes up in price.

While this obviously hurts countries with which Iran has previously had cordial relations (particularly Qatar, which is a major exporter of LNG), I genuinely don’t think it cares anymore.

I mean, Iran has launched drones and missiles at targets located within Qatar’s territory, resulting in (at the latest count) 16 civilian injuries. Qatar shot down a couple of Iranian jets last week. I’m not sure what pressure any of the Gulf countries could exert on Iran to make it back down.

I don’t see the security situation improving, either. Iran’s Shahed drones are cheap and fairly easy to manufacture, and were developed under some of the world’s most punishing sanctions, while the country was cut off from the global supply chain. It then licensed the design to Russia, another heavily-sanctioned country, which has employed them to devastating effect in Ukraine.

Iran can produce these in bulk, and then — for a fraction of the cost of an American Tomahawk missile — send them out as a swarm to hit passing ships. Even without the ability to produce new ones, Iran is believed to have possessed a pre-war stockpile of tens of thousands of Shahed drones.

Shaheds aren’t complicated, or expensive, or flashy, or even remotely sophisticated, and that’s what makes them such a threat. It took Ukraine a long time to effectively figure out how to counter them, and it’s done so by using a whole bunch of different tactics — from land-based defenses like the German-made Gepard anti-aircraft gun, to interceptor drones, to repurposed 1960s agricultural planes, to (quite literally) people shooting them down with assault rifles from the passenger seat of a propeller-powered plane.

Ukraine has the experience in combating these drones, and even so, some still manage to slip through its defences, often hitting civilian infrastructure.

Airstrikes can probably reduce the threat to shipping (though not without exacting an inevitable and horrible civilian cost), but they can’t eliminate it.

Hell, even the Houthis — despite only controlling a small portion of Yemen, and despite efforts by a coalition of nations to degrade their offensive capabilities — still pose a risk to maritime traffic heading towards the Suez Canal.

Given the cargo these ships carry, any risk is probably too much risk for the insurers, for the carriers, and for the neighbouring countries. While I could imagine the US, at some point, saying “great news! It’s fine to go through the Strait of Hormuz now,” and though it has started offering US government-backed reinsurance for vessels, I don’t know if any shippers will actually believe it or take advantage of it.

And so, we get to the third point on my list. Regime change.

Do I believe that the Iranian government is deeply unpopular with its own people? Yes. Do I believe that said government can be overthrown by airstrikes alone? No. Do I believe that Iran’s government will do anything within its power to remain in control, even if that means slaughtering tens of thousands of their own people? Yes.

Even if there was an uprising, who would lead it? Iran’s virtually cut off from the Internet, and movement within the country is restricted, making it hard for any opposition figures to organize. The two most high-profile outside opposition figures — Reza Pahlavi, the son of the former Shah, and Maryam Rajavi, leader of the MEK and NCRI — both have their own baggage, and they’re living in the US and France respectively.

As I said previously, this isn’t me wading into geopolitics, but more of a statement that there’s no way of knowing when things will eventually return to normal. This conflict might wrap up in a couple of weeks, or it might be months, or even longer than that.

All this amounts to a huge amount of global oil production being bottled up, and it’s made worse by the slight problem that Iran produces a lot of oil itself, sending most of it (over 80%) to China. With Iran unable to export crude, and its production facilities now under attack, China’s going to have to look elsewhere, which will result in even higher oil prices.

Which, in turn, will make everything else more expensive.

The Energy Crisis Hits The AI Bubble

That is what brings us back to the AI bubble.

Now, given that most of the high-profile data center projects you’ve heard about are based in the US, which is largely self-sufficient when it comes to hydrocarbons, you’d assume that it would be business as usual.

And you would be wrong.

You see, this is a global market. Prices can (and will!) go up in the US, even if the US doesn’t import oil or natural gas from abroad, because that’s just how this shit works. Sure, there are variations in cost where geography or politics play a role, but everyone will be on the same price trajectory.

While we won’t see the same kind of shortages that we witnessed during the last oil shock (the one which ended up taking down the Carter presidency), it will still hurt. The US may have decoupled itself from oil imports, but it hasn’t decoupled (and probably can’t decouple) itself from global pricing dynamics.

The US has faced a few major oil shocks — the first in 1973, after OPEC issued an embargo against the US following the Yom Kippur War, which ended the following year after Saudi Arabia broke ranks, and the second in 1979, following the Iranian Revolution — and both hurt…a lot.

This won’t be much different.

First, inflation. As the cost of living spikes, people will start demanding higher wages, which will, in turn, be passed down through higher prices.

At least, that’s what would normally happen. Paul Krugman, the Nobel-winning economist, wrote in his latest Substack post that US workers in the 1970s were often unionized, and they benefited from cost-of-living adjustments written into their contracts.

Sadly, we live in 2026. Union membership hasn’t recovered from the dismal Reagan years, and with layoffs and offshoring, combined with an already tough jobs market, workers have little leverage to demand raises. We’re in an economy oriented around do-nothing bosses that loathe their workers, one where workers will get squeezed even further by the consequences of any economic panic, even if it’s one caused by multiple events completely out of their control.

So, it’s unlikely that we’ll see a wage-based amplification of any inflation that comes from the current situation.

That said, depending on how bad things get, we will see inflation spike, and increases in inflation are usually met with changes in monetary policy, with central banks raising the cost of borrowing in an attempt to “cool” the economy (i.e., reduce consumer spending so that companies are forced to bring down prices).

And we’d just started to bring down interest rates, with the Fed announcing in December that it projected rates of 3.4% by the end of 2026.

Iran changes that in the most obvious way possible — if prices soar, interest rates may follow, and if rates go up, even by a percentage point or two, financing the tens and hundreds of billions of dollars in borrowing that the AI bubble demands will become significantly more expensive.
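To put rough numbers on that, here’s a minimal Python sketch of how a rate rise feeds into annual interest costs. The $100 billion principal and the 6% starting rate are hypothetical, picked only to make the arithmetic easy, and the sketch assumes simple interest on debt that reprices immediately, which real loans often don’t.

```python
# Hypothetical illustration: PRINCIPAL and BASE_RATE are assumptions chosen
# for easy arithmetic, not figures reported for any company or loan.

def annual_interest(principal: float, rate_pct: float) -> float:
    """Simple (non-compounding) annual interest at a percentage rate."""
    return principal * rate_pct / 100


PRINCIPAL = 100e9  # hypothetical: $100 billion of data center debt
BASE_RATE = 6.0    # hypothetical: a 6% borrowing rate before the shock

for bump in (0, 1, 2):  # rises of zero, one and two percentage points
    rate = BASE_RATE + bump
    cost = annual_interest(PRINCIPAL, rate)
    extra = cost - annual_interest(PRINCIPAL, BASE_RATE)
    print(f"{rate:.1f}%: ${cost / 1e9:.1f}B a year in interest (+${extra / 1e9:.1f}B)")
```

Under these assumptions, every extra percentage point on $100 billion is another $1 billion a year in interest, and the build-out involves tens and hundreds of billions of dollars of borrowing.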

For some context, the International Monetary Fund’s Kristalina Georgieva recently said “...a 10% increase in energy prices that persists for a year would push up global inflation by 40 basis points and slow global economic growth by 0.1-0.2%,” per The Guardian, who also added…

Some economists argue that a jump in the price of energy and transport costs, significant though they are for households and businesses, could prove to be a sideshow if the bombing of Iran by the US and Israel destabilises financial markets already worried about ballooning AI stocks and the impact of US import tariffs.
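As a rough illustration of the IMF figure, here’s a short Python sketch that turns Georgieva’s rule of thumb into a function. Scaling it linearly to bigger shocks is an assumption made here, not something the IMF said, and using the 30% overnight oil spike as a stand-in for all energy prices (and assuming it lasts a full year) is a big simplification.

```python
# Rule-of-thumb sketch based on the IMF quote: a 10% rise in energy prices
# that persists for a year adds roughly 40 basis points to global inflation.
# ASSUMPTION: the effect scales linearly with the size of the shock. The IMF
# quote does not say this; it is here only for illustration.

BPS_PER_10_PCT_RISE = 40  # from Georgieva's quote, per The Guardian


def added_inflation_bps(energy_price_rise_pct: float) -> float:
    """Approximate added global inflation in basis points (linear assumption)."""
    return energy_price_rise_pct / 10 * BPS_PER_10_PCT_RISE


for rise in (10, 30):
    print(f"{rise}% energy price rise for a year -> ~{added_inflation_bps(rise):.0f} bps")
```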

And remember: the AI bubble, along with the massive private equity and credit funds backing it, is fueled almost entirely by debt. All this chaos and potential for jumps in inflation will also affect the affordability calculations that lenders will make before loaning the likes of Oracle and Meta the money they need at a time when lenders are already turning their noses up at Blue Owl-backed data center debt deals.

The alternative is, of course, not raising interest rates — which, if the Fed loses its independence, is a possibility — which would be equally catastrophic, as we saw in the case of Turkey, whose president, Recep Tayyip Erdogan, has a somewhat… ahem… “unorthodox approach to monetary policy.”

Erdogan believes that high interest rates cause inflation, a theory which he tested to the detriment of his own people: Turkey has suffered some of the worst inflation of any major economy, and its currency lost nearly 90% of its value in five years.

The Consequences of Building Everything On Debt

It’s not just the data centers, either. As interest rates go up, VC funds tend to shrink, because the investors that back said funds can get better returns elsewhere, and with much less risk.

As I discussed in the Hater’s Guide to Private Equity, 14% of large banks’ total loan commitments go to private equity, private credit and other non-banking institutions, at a time when (to quote Forbes) PE firms are taking an average of 23 months fundraising (up from 16 months in 2021), after private credit’s corporate borrowers’ default rates (as in the loans written off as unpaid by the borrower) hit 9.2% in 2025.

Put really simply, private equity, private credit, venture capital and basically everything to do with technology currently depends on the near-perpetual availability of debt. The growth of private credit is so recent that we truly don’t know what happens if the debt spigot gets turned off, but I do not think it will be pretty.

Things get a little worse when you remember that famed business dipshits SoftBank are currently trying to raise a $40 billion loan to fund its three $10 billion Klarna-esque payments as part of its $30 billion investment in OpenAI’s not-actually-$110-billion-yet funding round. How SoftBank — a company that raised a $15 billion bridge loan due to be paid off in around four months, and that has about $41.5 billion in existing debt maturing in the next nine months or so that will need to be refinanced, per JustDario — intends to take on another $40 billion is beyond me. And that’s a sentence I would’ve written before the war in Iran began.

There’s also evidence that links lower IPO numbers to rising inflation rates, which means that achieving the exit that investors want will become so much harder — and so, they might as well not bother. Need proof? SoftBank-owned mobile payments company PayPay delayed its IPO last week, and I quote Reuters, because “...markets were rattled by [the attack] on Iran, according to two people familiar with the matter.”

Inflation also negatively affects company valuations — which, again, will influence whether investors open their purse strings.

This is all a long-winded way of saying that the AI industry is about to enter a world of hurt. Every AI startup is unprofitable, which means they need to raise money from venture capitalists, who raise money from investors that aren’t paying them, pension funds and insurers, and private equity and credit firms that raise money from banks, both of which will struggle should central bank rates spike.

The infrastructural layer — AI data centers — also requires endless debt (due to the massive upfront costs for NVIDIA chips and construction), and that debt was already becoming difficult to raise.

Then there are the practical opex and capex costs. Higher inflation and interest rates mean that any contractors building the facilities will insist on higher fees, because their costs — labor, the price of filling up a van or a truck with gas, or paying for building materials — have gone up. And they’ll probably pad the increase a bit to account for any future rises in inflation.

Those gas turbines you’re running to power your facility? Yeah, feeding those is going to get much more expensive. Natural gas is up as much as 50%, and a lot of US LNG export capacity is going to serve markets in Asia and Europe to take advantage of the spike in prices, which will mean an increase in prices for US consumers.

In fact, you don’t even need interest rates to spike for things to get nasty. As the price of oil continues to skyrocket, flying a Boeing 747 filled with GB200 racks from Taiwan to Texas or mobilizing the thousands of people that work (to quote Bloomberg) day and night to build Stargate Abilene will become far more expensive.

And even in the very, very unlikely event that things somehow quickly return to whatever level of “normal” you’d call the world before the conflict started, even brief shocks to the financial plumbing are enough to destabilize an already-fractured hype cycle.

The Beginning of History

Last week, Bloomberg reported something I’d already confirmed three weeks ago — that OpenAI was no longer part of the planned expansion of Stargate Abilene beyond its initial two (of eight) buildings, and Abilene is already massively delayed from its supposed “full energization” by mid-2026.

Oracle disputes the report (and if Oracle is wrong, I imagine investors will rightly sue), claiming that Crusoe (the developer) and Oracle are “operating in lockstep,” which doesn’t make sense considering the delays or, well, reality.

My sources in Abilene also tell me that the expansion fell apart due to Oracle’s dissatisfaction with the revenue it was making on buildings one and two, and that a bidding war was taking place between Meta and Google for the future capacity.

Bloomberg’s Ed Ludlow also reports that NVIDIA put down a $150 million deposit as Crusoe attempts to lock down Meta as a tenant — a very strange thing to do considering Meta is flush with cash, suggesting a desperation in the hearts of everybody involved. It’s also very, very strange to have a supplier get involved in a discussion between a vendor and a customer, almost as if there’s some sort of circular financing going on.

As I reported back in October, Stargate Abilene currently only has around 200MW of power, and The Information reports that the rest of its power won’t be available for a year or more, something I also said in October.

As self-serving as it sounds, I really do recommend you read my premium piece about the AI Bubble’s Impossible Promises, because I laid out there how stupid and impossible gigawatt data centers were before the war in Iran. We’ve already got a shortage in the electrical-grade steel and transformers required to expand America’s (and the world’s) power grid, we’ve already got a shortage of skilled labor required to build that power (and data centers in general), and we’re moving massive amounts of heavy shit around a large patch of land using thousands of people, which will cost a lot of gas.

I don’t know why, but the media and the markets seem incapable of imagining a world where none of this stuff happens, clinging to previous epochs where “things worked out” and where “things were okay” without a second thought.

In The Black Swan, Nassim Taleb makes the point that “…the process of having [journalists] report in lockstep [causes] the dimensionality of the opinion set to shrink considerably,” saying that they tend to “[converge] on opinions and [use] the same items as causes.”

In simpler terms, everybody reporting the same thing in the same way naturally makes everybody converge on the same kinds of ideas — that AI is going to be a success because previous eras have “worked out,” even if they can’t really express what “worked out” means.

The logic is almost childlike — in the past, lots of money was invested in stuff that didn’t work out, but because some things worked out after spending lots of money, spending lots of money will work out here.

The natural result is that reporters (and bloggers) seek endless positive confirmation, and build narratives to match. They report that Anthropic hit $19 billion in annualized revenue and OpenAI hit $25 billion in annualized revenue — which has been confirmed to refer to a 4-week-long period of revenue multiplied by 12 — as proof that the AI bubble is real, ignoring the fact that both companies lose billions of dollars and that my own reporting says that OpenAI made billions less and spent billions more in 2025 than has been reported. They assume that a company would not tell everybody something untrue or impossible, because accepting that companies do this undermines the structure of how reporting takes place, and means that reporters have to accept that they, in some cases, are used by companies to peddle information with the intent of deception.

And thanks to an affidavit from Anthropic Chief Financial Officer Krishna Rao filed as part of Anthropic’s suit against the Department of Defense’s supply chain risk designation, it’s clear that the deception was intentional, as the affidavit confirmed that Anthropic’s lifetime revenue “to date” (referring to March 9th 2026) is $5 billion, and it has spent $10 billion on inference and training.

Anthropic’s CFO Said It Made $5 Billion in Lifetime Revenues — But When You Add Up The Annualized Revenues Reported, They’re In Excess of $6 Billion, Suggesting Reporters Are Being Misled

To be abundantly clear, this means that the month behind Anthropic’s previous statement that it made $14 billion in annualized revenue (stated by Anthropic on February 12 2026, and referring, I’ve confirmed, to a month-long period multiplied by 12), a period of 30 days in which it made around $1.16 billion, accounts for more than 23% of its lifetime revenue.

This comes down to which Anthropic you believe, because these two statements do not match up. I am not stating that it is lying, but I do believe annualized revenue is a deliberate attempt to obfuscate things and give the vibe that the business is healthier than it is. I also do not think it’s likely that Anthropic made 23% of its lifetime revenue in the space of a month.
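The conversion is simple enough to check yourself. Here’s a minimal Python sketch, assuming (as the reporting cited above says) that the annualized figure is one month of revenue multiplied by 12, and treating the affidavit’s “exceeding $5 billion” as exactly $5 billion. It carries one more decimal place than the text, which rounds the month down to $1.16 billion.

```python
# Convert an annualized revenue figure back into the single month it was
# built from (annualized = one month x 12), then compare that month with the
# lifetime total in the CFO's affidavit.

def monthly_from_annualized(annualized: float) -> float:
    """Annualized revenue is one month's revenue x 12, so divide by 12."""
    return annualized / 12


LIFETIME_REVENUE = 5e9    # "exceeding $5 billion to date" (March 9, 2026)
FEB_12_ANNUALIZED = 14e9  # Anthropic's stated figure on February 12, 2026

month = monthly_from_annualized(FEB_12_ANNUALIZED)
print(f"Implied month of revenue: ${month / 1e9:.3f}B")              # $1.167B
print(f"Share of lifetime revenue: {month / LIFETIME_REVENUE:.1%}")  # 23.3%
```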

What this almost certainly means is that the sources that told media outlets that Anthropic made $4.5 billion in 2025 were misleading them. The exact quote from the affidavit is that “...[Anthropic] has generated substantial revenue since entering the commercial market—exceeding $5 billion to date,” and while boosters will say “uhm, it says ‘exceeding,’” if it were anything higher than $5.5 billion, Anthropic would’ve absolutely said so.

We can also do some very simple maths that suggests that Anthropic’s “annualized” figures are…questionable. On February 12 2026, annualized revenue hit $14 billion. Five days before the lawsuit was filed, it was $19 billion, “with $6 billion added in February” (per Dario Amodei at a Morgan Stanley conference), suggesting that annualized revenue was $13 billion at the start of February, or $1.083 billion a month.

Even if we assume a flat billion, that means that Anthropic made $2.16 billion between January and the end of February 2026. And that’s not including the revenue made in March so far.
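Here’s that arithmetic as a short Python sketch. The inputs come from the paragraphs above; how the “flat billion” and the $14 billion month combine into the two-month total is an interpretation, flagged in the code, and the sketch keeps an extra decimal place where the text rounds down.

```python
# Back out the pre-February annualized figure from the "$6 billion added in
# February" remark, then convert annualized figures into single months
# (annualized = one month x 12).
LATEST_ANNUALIZED = 19e9  # five days before the lawsuit was filed
ADDED_IN_FEBRUARY = 6e9   # per Dario Amodei at the Morgan Stanley conference
FEB_12_ANNUALIZED = 14e9  # Anthropic's February 12, 2026 figure

pre_february_annualized = LATEST_ANNUALIZED - ADDED_IN_FEBRUARY  # $13B
pre_february_month = pre_february_annualized / 12

# ASSUMPTION: the two-month total pairs a "flat" $1 billion for January with
# the roughly $1.167 billion month behind the $14 billion figure.
JANUARY_FLOOR = 1e9
two_month_total = JANUARY_FLOOR + FEB_12_ANNUALIZED / 12

print(f"Pre-February month: ${pre_february_month / 1e9:.3f}B")  # $1.083B
print(f"January plus February: ${two_month_total / 1e9:.3f}B")  # $2.167B
```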

But I’m a curious little critter, so I went ahead and added up every time Anthropic has talked about its annualized revenue from 2025 onward (you can find the results, with links, here!). Based on my calculations, the published annualized revenues alone get us to $4.837 billion.

We are, however, missing several periods of time, for which I’ve calculated “safe” estimates (as in lower ones, so that I’m giving Anthropic the benefit of the doubt) based on the most recent reported figures before each period.

- April 1 to 30, 2025, which I estimate as $166 million based on reports of Anthropic’s annualized revenue being $2 billion at the end of March 2025.

- August 1 to August 20, 2025, which I estimate as $219.1 million based on July 2025’s revenues ($4 billion).

- November 1 to November 29, 2025, which I estimate as $556 million, based on October’s $7 billion in annualized revenues.

- January 1 to January 11, 2026, which I estimate as $271 million, assuming $9 billion in annualized revenue (based on reported December revenues).
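For anyone who wants to reproduce the gap-filling, here’s a minimal Python sketch of one method consistent with the April and November estimates above. A full missing month is the annualized figure divided by 12, and a partial period is a daily rate (annualized divided by 365, an assumed day count) multiplied by the number of missing days. This is a reconstruction for illustration, not a published formula, and it truncates to whole millions the way the list does.

```python
# Sketch of the gap-filling method (a reconstruction, not a published formula):
# - a full missing month = annualized revenue / 12
# - a partial period = (annualized revenue / 365) x number of missing days
# ASSUMPTION: a 365-day year for the daily rate.

def full_month(annualized: float) -> float:
    """One month of revenue implied by an annualized figure."""
    return annualized / 12


def partial_period(annualized: float, days: int) -> float:
    """Revenue for a run of days, using a flat daily rate."""
    return annualized / 365 * days


april_2025 = full_month(2e9)             # $2B annualized at end of March 2025
november_2025 = partial_period(7e9, 29)  # October's $7B, November 1 to 29

# int() truncates to whole millions, matching how the estimates are written.
print(f"April 2025: ${int(april_2025 / 1e6)}M")               # $166M
print(f"November 1-29, 2025: ${int(november_2025 / 1e6)}M")   # $556M
```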

With these estimates, we get a grand total of $6.66 billion (ominous!), which is a great deal higher than $5 billion. When you remove the estimates and annualized revenues for 2026, you get $3.642 billion, which heavily suggests that Anthropic did not, in fact, make $4.5 billion in 2025.

Sidenote: there’s absolutely some waviness in the definition of what the actual revenue is for a period. ARR is meant to refer to a month's snapshot. That’s what I’m doing here. If Anthropic does anything strange (such as annualizing based on a week of revenue), I have no control over that, but to be clear, reports have said “month.”

There isn’t a chance in Hell this company made $4.5 billion in 2025 based on its own CFO’s affidavit. I also think it’s reasonable to doubt the veracity of these annualized revenues or, in my kindest estimation, to doubt that Anthropic is using any kind of standard “annualized” formula.

Here are the ways in which people will try and claim I’m wrong:

- “Ed, it’s commercial revenue!” — this is all revenue. Anthropic doesn’t have “non-commercial revenue,” unless you are going to use a very, very broad version of what “non-commercial” means, at which point you have to tell me why you trust Anthropic.

- “This doesn’t include all the revenue up until March 2026! Maybe this suit was written weeks ago!” — even if it doesn’t, based on Anthropic’s own numbers, things don’t line up. Also, this was written specifically as part of the lawsuit with the DoD. It’s recent.

- “It says ‘exceeding’!” — it also says “over $10 billion in inference and training costs.” Can I just say whatever number I want here? Because if this is your argument, that’s what you’re doing.

- “That $5 billion number is accurate!” — the only way this makes sense is if some or all of these annualized revenues are incorrect.

I think it’s reasonable to doubt whether Anthropic made anywhere near $4.5 billion in 2025, whether Anthropic has annualized revenues even approaching those reported, and whether anything it says can be trusted going forward.

It appears one of the most prominent startups in the valley has misled everybody about how much it makes, or if it has not, that somebody else is perpetuating a misinformation campaign. Add together the annualized revenues. Look at the links. Do the maths. I got the links for annualized revenues from Epoch AI, though I have seen all of these before in my own research.

People are going to try and justify why this isn’t a problem in all manner of ways. They’ll say that actually Anthropic made less money in 2025 but that’s fine because they all could see what annualized revenues really meant. So far, nobody has a cogent response, likely because there isn’t one.

I haven’t even addressed the $10 billion in training and inference costs, because good lord, those costs are stinky, and based on my own reporting — which did not come from Anthropic, which is why I trust it! — Anthropic spent $2.66 billion on Amazon Web Services from January through September 2025, or around 26% of its lifetime compute spend. That’s remarkable, and suggests this company’s compute spend is absolutely out of control.

This leads me to one more quote from Anthropic’s CFO:

We also have funded substantial critical technology infrastructure (“compute”) purchases via long-term financing arrangements, where it is critical that our counterparties believe that Anthropic can and will repay or otherwise fulfill its financial obligations.

Without attempting to influence their decision making, if I were a counterparty to a company like this, my biggest concern would now be that this filing appears to suggest that Anthropic’s revenues are materially smaller than I believed.

It might seem dangerous to be like me, pointing at stuff and saying “that doesn’t make sense!” or questioning a narrative held by the entire stock market and most of modern journalism, but I’d argue the real danger is that narrow, narrative-led, establishment-driven thinking makes it impossible for reporters to report.

While you might be able to say “a source told me that something went wrong,” the natural drive to report on what everybody else is saying means that this information is often reported with careful weasel words like “still going as planned” or “still growing incredibly fast.” It’s a kind of post-factual decorum — a need to keep the peace that frames bad signs as bumps in the road and good signs as cast-iron affirmations of future success.

This is a catastrophic failure of journalism that deprives retail investors and the general public of useful information. It also — though it feels as if reporters are “getting scoops” or “breaking news” — naturally magnetizes journalists toward information that confirms the narrative, or “leaks” that are actually the company intentionally getting something in front of a reporter so that they (the reporter) can appear as if this was “investigative news” versus “marketing in a different hat.”

It also means that modern journalism is ill-equipped, and no, this is not a “new” phenomenon. It is the same thing that led to the dot com bubble, the NFT bubble, the crypto bubble, the Clubhouse bubble, and the AR and VR bubble, and it will lead to many more bubbles to come.

To avoid being “wrong,” reporters are pursuing stories that prove somebody else right, which almost invariably ends with the reporter being wrong. “Pursuing stories to prove somebody else right” means that a great many reporters (and newsletter writers) that claim to be objective and fact-focused end up writing the narrative that companies use to raise money using evidence manufactured by the company in question.

In some cases, this is an act of cowardice. Following the narrative because it’s easy and because everybody’s doing it adds a layer of reputation laundering. If everybody failed, everybody was conned and thus nobody has to be held accountable, and because there really has never been any accountability for the media being wrong about any previous bubbles, the assumption is that it’ll never happen.

However you may feel about my work or what I’m saying, I need you to understand something: journalism, both historically and currently, is unprepared for the consequences of being wrong.

The current media consensus around the AI bubble is that even if it pops it will be fine, with some even saying that “even if OpenAI folds, everything will work out, because of the dot com bubble.” This is a natural attempt to rationalize and normalize the chaotic and destructive — an attempt to map how this bubble would burst onto previous bubbles because new things are difficult and scary to imagine.

There has never been a time when the entire market crystallised around a few specific companies — not even the dot com bubble! — and then built an entire infrastructural layer mostly in service of two of them, with a price tag now leering close to the $1tn mark.

Let’s get specific. The scoffing and jeering I get from people when I say that AI demand doesn’t exist or that AI companies don’t have revenues or that OpenAI or Anthropic are unsustainable is never met with a good faith response, just quotes about how “Amazon Web Services lost lots of money” or “Uber lost lots of money” or that “these are the fastest growing companies of all time” or something about “all code being written by AI,” a subject I discussed at length two weeks ago.

The Large Language Model era is uniquely built to exploit human beings’ belief that we can infer the future based on the past, both in how it processes data and in how people report on its abilities. It exploits media outlets whose staff are not given the time (or held to a standard that requires them) to actually learn the subjects in question, and sells itself based on the statements that “this is the worst it’ll ever be” and “previous eras of investment worked out.”

LLMs also naturally cater to those who are willing to accept substandard explanations and puddle-deep domain expertise. The slightest sign that Claude Code can build an app — whether it’s capable of actually doing so or not — is enough for people who are on television every day to say that it will build all software, because it confirms their bias that the cycle of innovation and incumbent disruption still exists, even if it hasn’t for quite some time. A glossy report about job displacement — even one that literally says that Anthropic found “no systematic increase in job displacement in unemployment” from AI — gets reported as proof that jobs are being displaced by AI because it says “AI is far from reaching its theoretical capability: actual coverage remains a fraction of what’s feasible.”

This is an aggressive exploitation of how willing people with the responsibility to tell the truth are to accept half-assed explanations, and how willing people are to operate based on principles garnered from the lightest intellectual lifts in the world.

The assumption is always the same: that what has happened before will happen again, even if the actuality of history doesn’t really reflect that at all. Society — the media, politicians, chief executives, shit, everyone on some level — is incapable of thinking of new stuff that would happen, especially if that new stuff would be economically destructive, such as a massive scar across all private credit, private equity and venture capital, one so severe that it may potentially destroy the way that businesses (and startups, for that matter) raise capital for the foreseeable future.

People are more willing to come up with societally-destructive theories — such as all software engineering and all journalism and all content being created by LLMs, even if it doesn’t actually make sense — because they fit their biases. Perhaps they’re beaten down by decades of muting the power of labor or the destruction of our environment. Perhaps they’re beaten down by the rise of the right and the destruction of the rights of minorities and people of colour.

Or more noxiously, perhaps they’re excited to be the one who called it first in the eyes of the new overlords they believe will own this (fictional) future, so much so that they’ll ignore the underlying ridiculousness of the economics, refuse to do any further reading that might invalidate their beliefs, or simply say whatever they’re told because it gets clicks and makes their advertisers, bosses or friends happy.

People are willing to fall in line behind mythology because conceiving an entirely different future is an intellectually challenging and emotionally draining act. It requires learning about a multitude of systems and interconnecting disciplines and being willing to admit, again and again, that you do not understand something and must learn more. There are plenty of people who are willing to do this, and plenty more who are not, and those are the people with TV shows and newspaper columns.

I believe we’re in a new era. It’s entirely different. Stop trying to say “but in the past,” because the past isn’t that useful, and it’s only useful if you’re capable of evaluating it critically and skeptically, and of making sure that it’s actually the same rather than merely feeling like it is.

I keep calling this era “The Beginning of History,” not because it directly reflects Francis Fukuyama’s “End of History” theory (which relates to democracies), but because I believe that those who succeed in this world are not those who are desperate to neatly fit it into the historical failures or successes of the past, but those who are willing to stare at it with the cold, hard fury of the present.

There are many signs that the past no longer makes sense: the collapse of SaaS (which I’ll cover in this week’s premium), the collapse of the business models of both venture capital and private equity, and the collapse of democracies under the weight of fascism because the opposition parties never seem to give enough of a fuck about the experiences of regular people.

That’s because using the past to dictate what will happen in the future is masturbatory. It allows you to feel smart and say “I know the most about anything, which means I know what’s going on.” It is, much like an LLM, assuming that simply reading enough is what makes somebody smart, that shoving a bunch of text in your head — whether or not you understand it is immaterial — is what makes somebody know something or good at something.

It’s an intellectually bankrupt position that I believe will lead those unable to adapt to the reality of the future to destruction. It leads to lazy thinking that grasps at confirmations rather than any fundamental understanding, depriving the general public of good information in favor of that which confirms the biases and wants and needs of the malignant and ignorant.

It takes courage to be willing to be wrong deliberately, but only if you admit it when you were wrong. This hasn’t happened in previous bubbles, and it has to happen this time for us to stop bubbles forming. I have made a great deal of effort to learn more as time goes on. I do not see boosters doing the same to prove their points. I will be pointing to this sentence in the future, one way or another.

So much more effort is put into humouring the ideas of the bubbles, and into proving the marketing spiel of the bubbles, all framed as a noxious “both-sides” approach that deprives the reader, listener or viewer of their connection with reality. It might be tempting to say this happens with cynicism too, except the majority of attention paid to bubbles is positive, and saying otherwise is a fucking lie.

Need to justify unprofitable, unsustainable AI companies? Uber lost money before. Need to explain why AI data centers being built ahead of demand isn’t a problem? Well, the internet exists, and people eventually used that fiber.

You can ignore actual proof while pretending to provide your own, all just by pointing vaguely to things in the past. It takes actual courage to form an opinion, something boosters fundamentally lack.

I’m not saying it’s impossible to make predictions, but that the majority of people make them with flimsy information, such as “this thing happened before” or “everyone’s saying this will happen.” I’m not saying you can’t try and understand what will happen next, but doing so requires you to use information that is not, on its face, generated by wishcasting or events that took place decades ago.

In the end, the greatest lesson we can learn is that, historically speaking, people tend to fuck around and then find out.

The assumption boosters make is that one can fuck around forever.

History tends to disagree.