Everyone, it’s time to talk about AI demand and the capacity constraint issues across the industry.

These constraints are not a result of “incredible demand” for AI, but the desperation of hyperscalers and the avarice of two near-trillion-dollar failsons living off their parents’ welfare. Just two weeks ago, Amazon and Google pledged to invest up to a combined $65 billion more in Anthropic, a company that raised $30 billion in February and plans to raise another $50 billion, following Amazon’s $15 billion (and as much as $35 billion more) investment in OpenAI in February.

This is not what you do when real, meaningful demand exists for AI services. Assuming that these rounds are closed at their higher limits, it will mean that Google has invested $43 billion and Amazon $33 billion in keeping Anthropic alive.

This also doesn’t make sense when you look at Anthropic’s own projections.

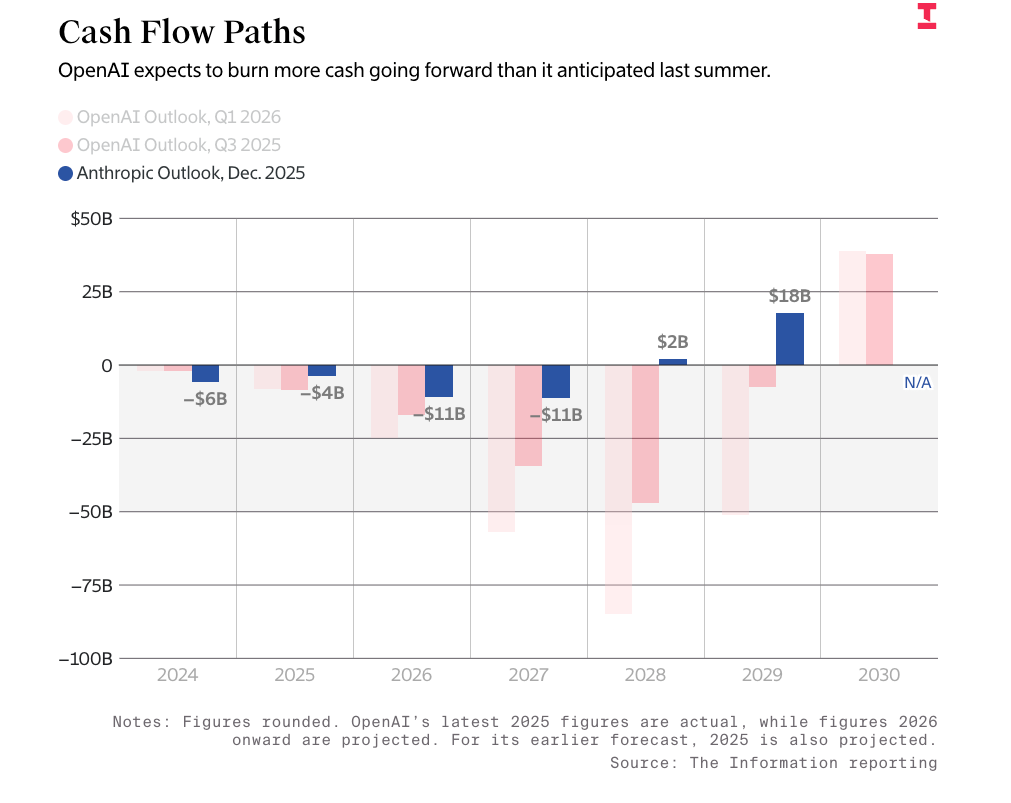

Per The Information, Anthropic believes it will become cash-flow-positive in the next two years after losing exactly $11 billion in both 2026 and 2027.

This only becomes more astonishing when you read that Anthropic intends to make $18 billion in 2026, $55 billion in 2027, $102 billion in 2028, and $148 billion in 2029. That’s revenue, not profit.

You may also be wondering how Anthropic goes from losing $11 billion two years running to making $2 billion in profit, and the answer is “nobody knows, including Anthropic.”

In any case, what Anthropic is saying in these projections is that it will spend $29 billion in 2026 and $66 billion in 2027 (its projected revenue plus the $11 billion it expects to lose each year). It’s also not clear what Anthropic’s actual costs will be in those years, because The Information decided it wasn’t necessary to include those. Thankfully, The Wall Street Journal did, suggesting that Anthropic intends to spend at least $86 billion on training costs alone through the end of 2029.
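
The $29 billion and $66 billion figures line up with the projected revenue plus the projected $11 billion annual loss. A minimal sketch of that arithmetic, assuming implied spend is simply revenue plus loss (an assumption for illustration, not something the projections state outright):

```python
# Figures in billions of USD, taken from the projections quoted above.
revenue = {2026: 18, 2027: 55}
loss = {2026: 11, 2027: 11}

# Assumption: implied spend is simply projected revenue plus projected loss.
implied_spend = {year: revenue[year] + loss[year] for year in revenue}

print(implied_spend)  # {2026: 29, 2027: 66}
```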

It’s become blatantly obvious that Google and Amazon are conspiring to keep one of their largest business lines alive, much like Microsoft funneled over $13 billion into OpenAI before allowing OpenAI to seek other compute providers when Microsoft slowed down its own data center construction. While I think Satya Nadella is a verbose dullard, Microsoft CFO Amy Hood is clearly quite smart, and jumped at the opportunity to allow Oracle to mortgage its entire future on OpenAI and Sam Altman’s clammy little dreams. Hood has managed to disconnect Microsoft from OpenAI’s welfare system, and while Microsoft claimed it was investing in Anthropic last November and in its February 2026 funding round, its latest 10-Q only mentions Anthropic once, as part of the word “philanthropic” on page 59.

And now Microsoft has ended its exclusivity deal over OpenAI’s models, allowing Amazon to sell them too, while still retaining a 20% revenue share from OpenAI’s sales, including those from its partnership with Amazon. This comes a few months after Amazon and OpenAI agreed a $138 billion, eight-year deal involving 2GW of capacity.

A gigawatt here, a gigawatt there, soon you’ll be making real money.

Except…nobody is making real money, and it appears that the vast majority of AI capacity and revenue is either going to OpenAI or Anthropic, and the rest is going to Microsoft, Google, and Amazon, who then spend that money on GPUs from NVIDIA or data centers to put them in.

What numbers we do have around AI revenues are extremely sad.

I estimate that 70% or more of Microsoft’s $37 billion in annual AI run rate comes from OpenAI’s estimated $24 billion in annualized compute spend on Azure, taking up more than 80% of Microsoft’s estimated 2GW of AI capacity. OpenAI, per its CFO, ended 2025 with 1.9GW of capacity, and 67% of CoreWeave’s revenue is Microsoft paying for OpenAI’s training compute.

Similarly, I estimate that roughly 80% of Amazon’s $15 billion in annualized AI revenue is accounted for by an estimated $12 billion in annualized AWS spend from Anthropic.

Today I’m pushing against the grain about as hard as anybody has in the AI bubble. I fundamentally believe that the AI demand story is nonsense — a mirage created by two companies that have only been successful as a result of having near-infinite resources provided to them by hyperscalers.

Google, Amazon, and Microsoft have spent a combined $803 billion in capex on the AI bubble so far, and OpenAI and Anthropic have raised (assuming their rounds fully close) over $252 billion.

Assuming the rounds close, these three hyperscalers have sunk a combined $78 billion in funding into OpenAI and Anthropic, all while building infrastructure almost entirely in their service, and signing deals with neoclouds to continue providing it.

The AI demand story is a lie, because the only way to create a company able to actually meet said demand is for a hyperscaler to fund it themselves.

Had Amazon not given it $8 billion and Google $3 billion in its earliest days, Anthropic would never have been able to grow to a scale where it could spend tens of billions of dollars a year on AWS and Google Cloud. Nor would OpenAI have been able to do so without early infusions of over $10 billion from Microsoft (the majority of which came in the form of Azure credits). None of this would’ve been possible had hyperscalers not effectively pre-sold their own infrastructure to their own incubated companies.

There is little “AI demand” outside of hyperscalers funnelling themselves money. The AI data center capacity crunch is a result of how long it takes to build data centers — Microsoft, Google and Amazon had an early lead, experience, and massive amounts of cash to deploy in a way that nobody else could.

Sidenote: Even with their experience, there’s still the inescapable reality that building large-scale, power-hungry data center facilities takes time, and some problems are so big that no amount of money can make them go away. Permitting, accessing energy, and the simple act of building a structure from scratch are all hard.

The irony is that this should be obvious to any software developer (and, by extension, any software company), for whom Fred Brooks’s The Mythical Man-Month is still, more than fifty years later, considered essential reading. It argues that some things can’t be accelerated by simply throwing money and resources at them.

Then consider the fact that a lot of the compute in the pipeline is being built by companies that don’t have much money, that started life in the incredibly dodgy world of crypto mining, and that thus don’t have much experience in building the kinds of AI data centers that OpenAI and Anthropic need.

That’s why you can’t find A) anybody who’s spending anywhere near as much on compute as OpenAI and Anthropic and B) anybody who’s managed to compete with them at any scale. Their existence is entirely subsidized, their success a mirage, and their compute spend effectively three companies feeding themselves money.

And despite all the crowing around “the insatiable demand for compute,” there doesn’t appear to be any evidence that anybody is spending that much on it outside of Anthropic and OpenAI.

If I were wrong, we’d see literally any other AI startup signing these massive compute contracts.

Coming Up On This Week’s Where’s Your Ed At Premium…

- Big Tech needs $3 trillion in new AI revenue by the end of 2030, or it’s wasted the majority of its capex.

- I estimate that Anthropic and OpenAI make up at least 85% of current and future AI compute spend, either through their own direct spending or hyperscalers like Google, Amazon or Microsoft renting capacity for them.

- Microsoft, Google and Amazon have built as much as 75% of their AI data center capacity to service two customers — OpenAI and Anthropic — putting the true cost of OpenAI and Anthropic, including total funding of $180 billion and $72 billion respectively, at at least $600 billion in combined infrastructure and equity investments.

- And, obviously, the vast majority of their funding goes toward compute spend across these three companies.

- OpenAI and Anthropic cannot afford to pay their future compute commitments without hyperscaler and venture capital subsidies.

- Outside of Anthropic, OpenAI, Google (for OpenAI, Anthropic and Meta), Microsoft (for OpenAI and Anthropic), Amazon (for OpenAI, Anthropic and Meta), CoreWeave (for OpenAI, Anthropic, and Meta) and Meta, less than $1 billion of actual AI compute demand exists.

- In all honesty, I’ve struggled to find more than $500 million outside of Jane Street, which also funded CoreWeave.

- OpenAI and Anthropic’s compute spend and demands have created an illusion of demand, becoming a systemic weakness in CoreWeave, Nebius, IREN, and TeraWulf.

- Hyperscaler buildouts appear to be almost entirely focused on either OpenAI or Anthropic, with little proof of their own services generating enough demand to fulfil them.

- There is not enough revenue to substantiate the existence of the in-progress data center construction, with over $157 billion in annual revenue required to monetize the 15.2GW (11.2GW critical IT) of data centers under construction to be finished by the end of 2027.

- Google is creating SPVs with investment firms to sell TPUs to itself, and has, via Broadcom, sold $63 billion in TPUs to Anthropic, which it will then bill for the compute, creating a circular financing system similar to NVIDIA’s.

- To support the estimated $800 billion in GPU sales that NVIDIA claims will come through by the end of 2027, there need to be 39.6GW of new data centers constructed (only 15.2GW of which are under construction), and around $383 billion in annual AI compute demand for an industry that, even with OpenAI and Anthropic’s spend, doesn’t even reach $70 billion in annual demand.
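
To put the last bullet’s figures in proportion, here is a rough sketch of the two ratios it implies. It uses only the numbers quoted in that bullet, and treats $70 billion as an upper bound on current annual demand, since the text says the industry doesn’t reach that figure. The variable names are illustrative.

```python
# Figures taken from the final bullet above.
required_gw = 39.6               # new data center capacity needed
under_construction_gw = 15.2     # capacity actually under construction
required_demand_bn = 383         # annual AI compute demand needed, in $bn
current_demand_ceiling_bn = 70   # upper bound on current annual demand, in $bn

# Share of the required capacity that is actually being built.
share_under_construction = under_construction_gw / required_gw

# How many times larger required demand is than the current ceiling.
# Because 70 is an upper bound, the true multiple is at least this large.
demand_multiple = required_demand_bn / current_demand_ceiling_bn

print(f"{share_under_construction:.0%} of required capacity is under construction")
print(f"Required demand is at least {demand_multiple:.1f}x current demand")
```

On these numbers, a little over a third of the required capacity is under construction, and annual demand would need to grow more than fivefold.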